Recently, I watched an interview on the Pioneer Network YouTube channel titled “Do LLMs Understand? AI Pioneer Yann LeCun Spars with DeepMind’s Adam Brown.”

The conversation between Yann LeCun and Adam Brown was intellectually stimulating and, in the best traditions of academic disagreement, conducted with an admirable mixture of confidence and polite contradiction. Yet one leaves the discussion with a lingering suspicion that the debate, while technically sophisticated, remains somewhat incomplete.

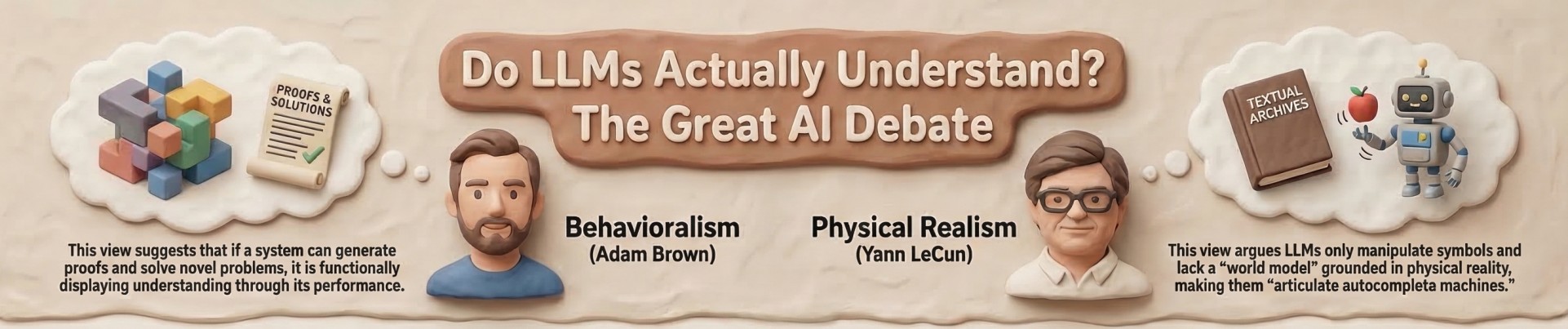

Both speakers attempt to answer a deceptively simple question: Do Large Language Models understand?

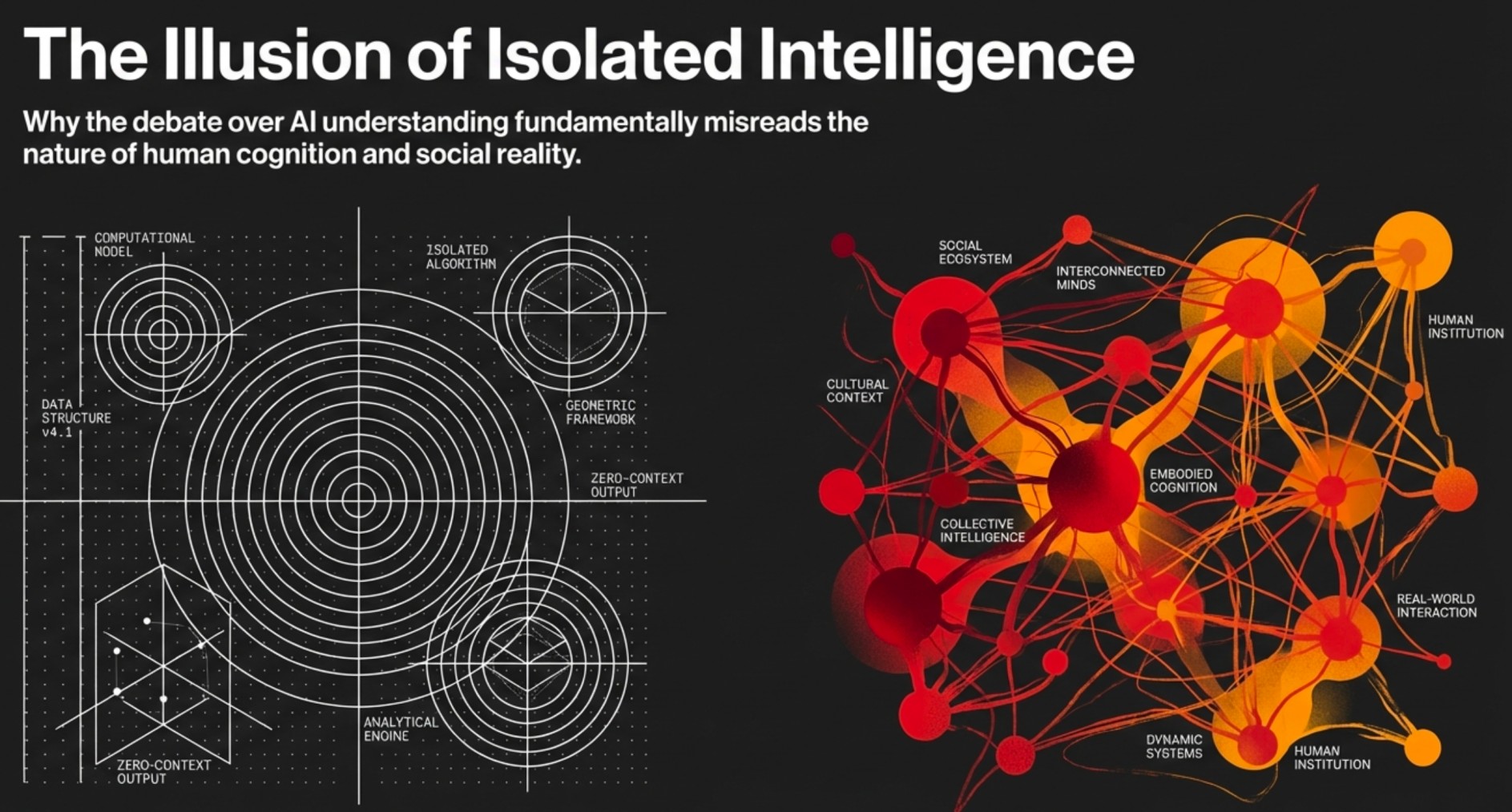

The answers offered oscillate between cautious optimism and principled scepticism. However, the entire discussion quietly assumes that intelligence is primarily a computational phenomenon. That assumption, I suspect, deserves a more thorough interrogation.

The Behavioural Test vs. The World Model

Brown’s position may be described as a behaviouralist one. If a system can debunk misconceptions, generate proofs, and solve problems it has not explicitly encountered, then, functionally speaking, it is displaying something that resembles understanding.

LeCun, meanwhile, plays the philosophical realist. LLMs, he argues, manipulate symbols without possessing a world model grounded in physical reality. They do not know the world; they merely rearrange descriptions of it.

On this point, LeCun is persuasive. Yet even his critique appears incomplete.

Human intelligence is not grounded merely in physical reality but also in social reality. Our understanding of the world is mediated through institutions: schools, laboratories, workplaces, governments, and markets. Intelligence emerges not simply from perception but from participation in structured systems of activity.

LLMs, by contrast, are trained on the textual residues of these systems rather than on the systems themselves.

They learn what humanity has said about the world, not how humanity actually operates within it.

Moravec’s Paradox, or the Curious Case of the Intelligent Dishwasher

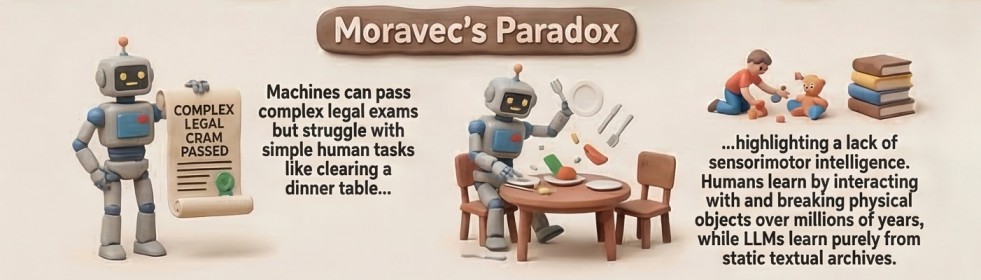

The interview also touches on Moravec’s Paradox: the familiar observation that tasks easy for humans (perception, movement, and common sense) remain astonishingly difficult for machines.

AI can pass legal exams, yet cannot reliably clear a dinner table.

This paradox is often treated as a technical puzzle. In reality, it is perhaps better understood as an evolutionary one.

Humans possess sensorimotor intelligence refined over millions of years. A child learns to manipulate objects not by reading about them but by interacting with them, dropping them, breaking them, and occasionally being told not to do so again.

One suspects that if LLMs had mothers, teachers, and the occasional stern supervisor, their reasoning abilities might develop rather differently.

The Data Fallacy

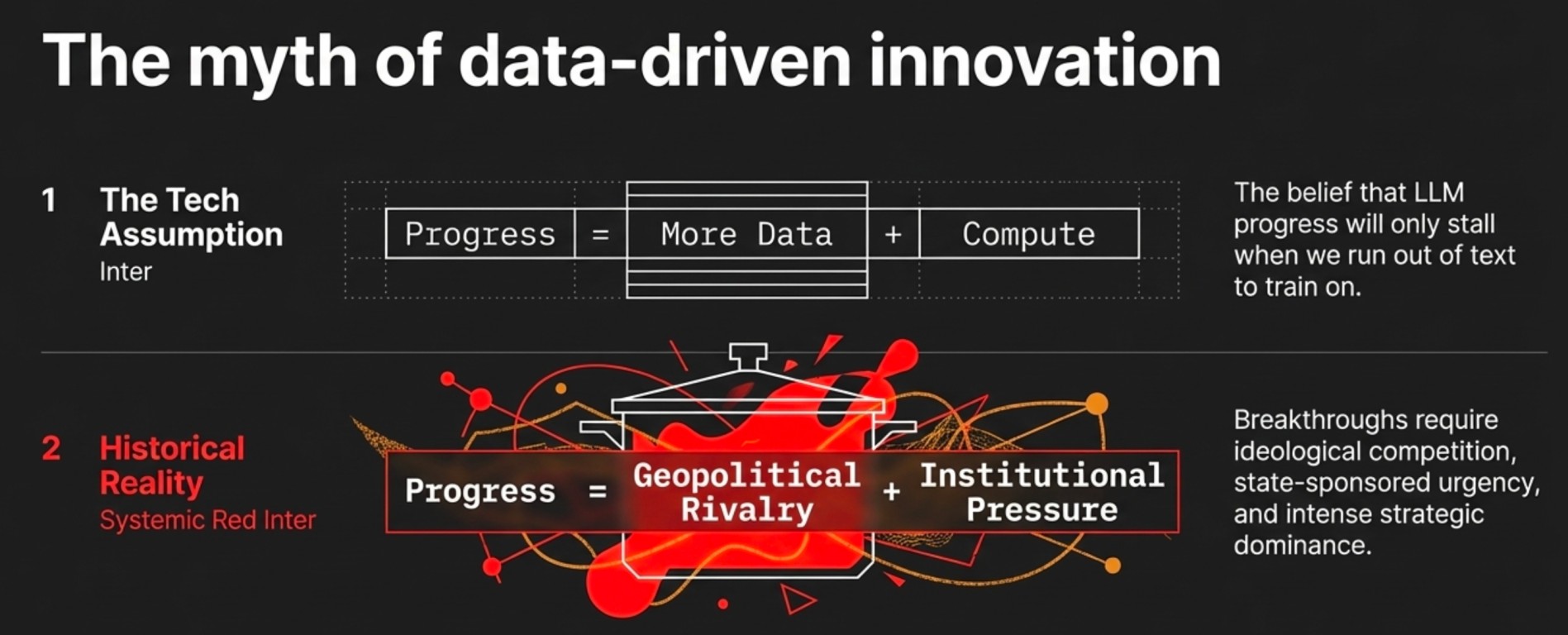

One line in the discussion particularly merits scrutiny: the suggestion that progress in AI may slow because LLMs are approaching the limits of what can be achieved through training on text alone.

Historically, major leaps in intelligence systems have rarely emerged from more data alone. They have emerged from interaction with reality, often under conditions of intense political or institutional pressure.

Consider the scientific advances during the Cold War.

The remarkable developments in rocketry, computing, and space exploration were not merely the result of researchers having access to more information. They were the result of ideological competition, geopolitical rivalry, and state-sponsored urgency.

The Space Race between NASA and the Soviet space programme did not occur because scientists had discovered a particularly rich dataset on orbital mechanics. It occurred because two superpowers found themselves in a contest for technological prestige and strategic dominance.

Innovation, in other words, is rarely an isolated computational process. It is a socially organised endeavour shaped by incentives, conflict, and ambition.

LLMs, impressive though they are, exist outside such systems.

Intelligence Without Consequences

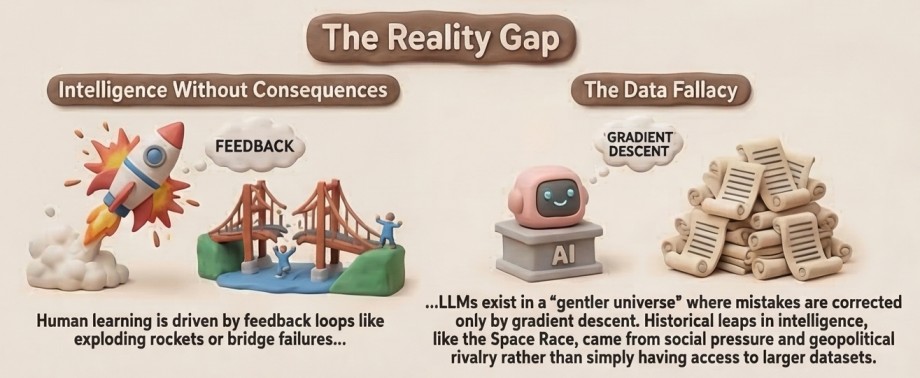

A defining feature of human intelligence is that it operates within environments where actions have consequences.

Scientists test hypotheses and discover they are wrong. Engineers build machines that occasionally fail spectacularly. Economists propose policies that sometimes produce the opposite of their intended effect.

Learning, in other words, occurs within feedback loops of success and failure.

LLMs inhabit a far gentler universe. Their mistakes are corrected through gradient descent rather than through exploding rockets, collapsing bridges, or angry voters.

This makes them extraordinarily capable textual tools but not necessarily participants in the messy ecosystem of real-world problem-solving.

The Labour Question That Nobody Mentions

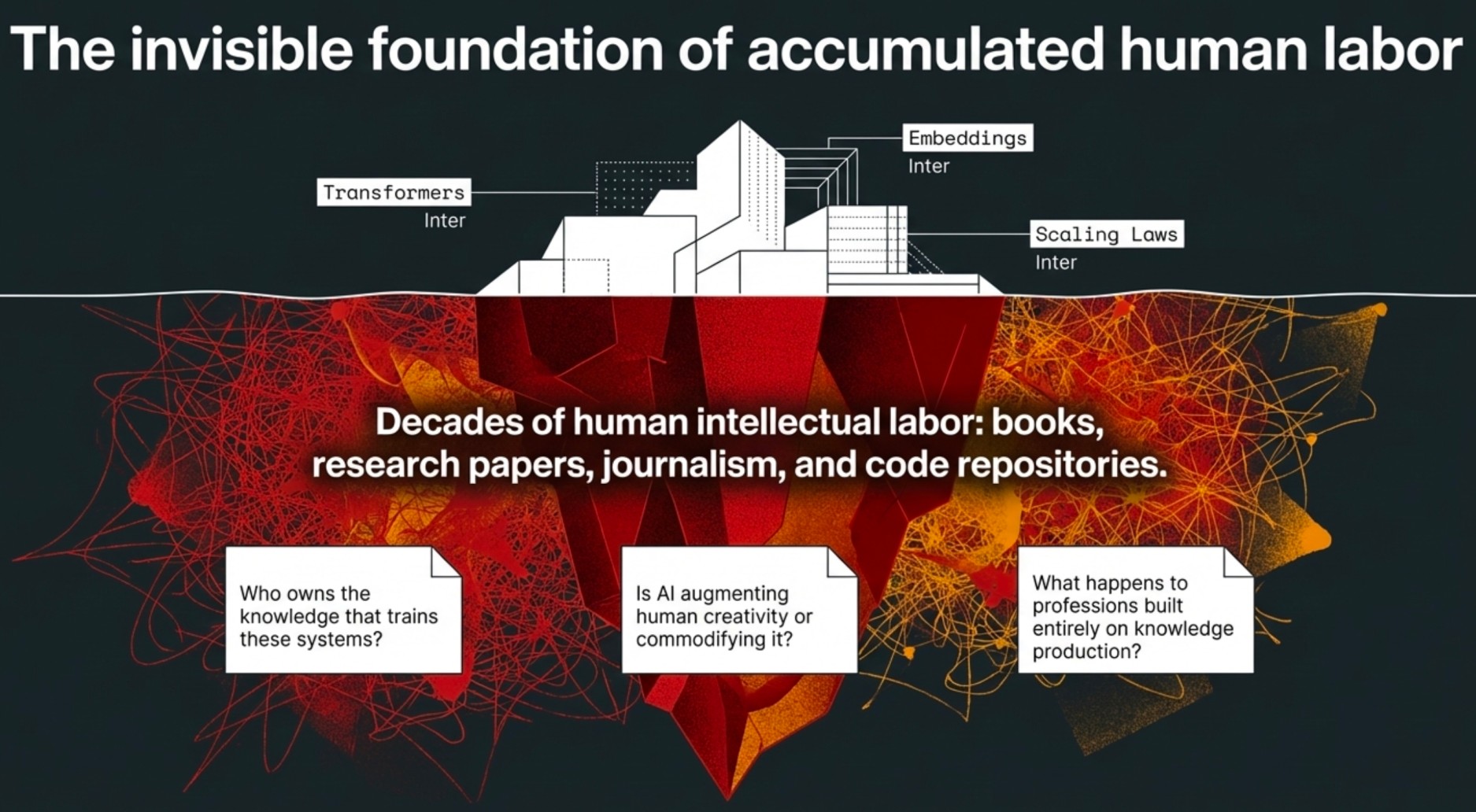

From the perspective of labour and institutional studies, one curious omission in the debate deserves mention.

Large AI models are trained on vast archives of human intellectual labour: books, research papers, journalism, programming repositories, and countless other artefacts produced by millions of individuals over decades.

The discussion often focuses on architecture (transformers, embeddings, and scaling laws) while quietly ignoring the social reality that these systems are effectively trained on humanity's accumulated cognitive output.

This raises questions that are less technical but perhaps more consequential:

- Who owns the knowledge that trains these systems?
- Is AI augmenting human creativity or commodifying it?
- What happens to professions built on knowledge production?

History suggests that technological revolutions tend to reorganise labour markets before societies manage to reorganise their institutions.

The AI revolution may prove no exception.

Open Source and the Politics of Intelligence

LeCun’s argument for open-source AI deserves serious attention.

If AI systems become the primary mediators of human knowledge, then control over them effectively becomes control over the flow of information itself.

Allowing a handful of corporations to dominate that infrastructure would be a remarkable concentration of epistemic power.

Open-source ecosystems offer a partial safeguard. Yet history reminds us that technology alone does not guarantee democratic outcomes. Institutional frameworks (laws, norms, and governance structures) must evolve alongside it.

Otherwise, the future may resemble not an open knowledge commons but a rather elegant information oligopoly.

The Renaissance Narrative

Both participants in the discussion seem to share an optimistic vision of an AI-assisted renaissance in human creativity.

One hopes they are correct.

Yet technological history counsels humility. Printing enabled mass literacy, but also propaganda. Industrialisation expanded prosperity, but only after a century of labour reform and social conflict.

If AI does usher in a renaissance, it will likely arrive after a period of institutional turbulence, labour disruption, and policy experimentation.

History rarely delivers revolutions in neat packages.

A Modest Conclusion

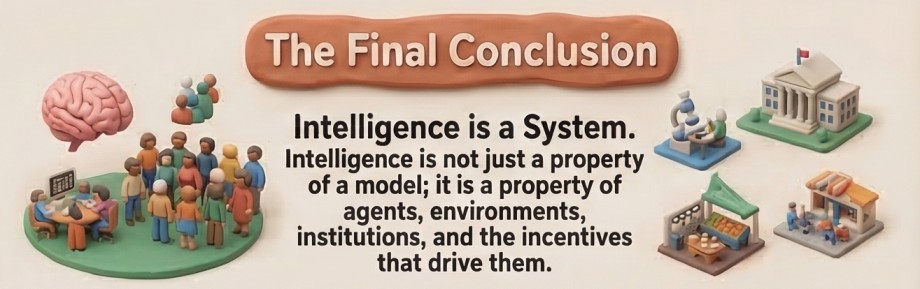

The debate between Yann LeCun and Adam Brown is illuminating precisely because it exposes the limits of our current thinking about artificial intelligence.

Yet the most interesting question may not be whether LLMs understand language.

The more profound question is whether we understand intelligence itself.

For intelligence, human or artificial, is not merely a property of models. It is a property of systems composed of agents, environments, institutions, and incentives.

Until AI participates in such systems, it will remain what it currently is: a brilliant conversationalist, an occasionally unreliable mathematician, and perhaps the most articulate autocomplete machine humanity has ever built.

Which, one must concede, is already quite an achievement.
